Implementing an AI-Powered Personalization System for a Loyalty Program

A standard loyalty program works like this: spend 1,000 rubles, get 10 points. AI personalization turns that into: "For Masha, who loves coffee and shops in the morning, we offer double points in the coffee section on weekdays before 10:00." Personalized loyalty programs show 2-3x higher engagement and retention than flat, one-rule-for-everyone programs.
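To make the arithmetic concrete, here is a minimal sketch of how the flat rule and the coffee offer from the example might be computed at checkout. The base rate of 10 points per 1,000 rubles comes from the text; the function name `points_for_purchase`, its parameters, and the way weekdays and hours are encoded are assumptions made for illustration, not part of the system described below.

```python
from datetime import datetime

BASE_POINTS_PER_RUBLE = 10 / 1000  # from the text: 1,000 rubles earn 10 points


def points_for_purchase(amount_rub, category, when,
                        bonus_category="coffee", multiplier=2.0,
                        until_hour=10):
    """Points for one purchase under a hypothetical time-boxed category offer."""
    base = amount_rub * BASE_POINTS_PER_RUBLE
    is_weekday = when.weekday() < 5      # Monday=0 ... Friday=4
    in_window = when.hour < until_hour   # strictly before 10:00
    if category == bonus_category and is_weekday and in_window:
        return base * multiplier
    return base


# Tuesday 08:30, coffee: the personalized offer applies
print(points_for_purchase(1000, "coffee", datetime(2024, 1, 9, 8, 30)))
# Saturday 08:30, coffee: only the flat rule applies
print(points_for_purchase(1000, "coffee", datetime(2024, 1, 13, 8, 30)))
```

The offer conditions here are hard-coded defaults; in the engine below they correspond to the `category`, `multiplier`, `valid_hours`, and `valid_days` fields of an offer.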

Personalizing Points Accrual

from anthropic import Anthropic
import pandas as pd
import numpy as np
from sklearn.ensemble import GradientBoostingClassifier
from dataclasses import dataclass
from typing import Optional
import json

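@dataclass
class LoyaltyOffer:
    user_id: str
    offer_type: str  # double_points, bonus_category, threshold_bonus, time_bonus, streak_reward
    category: Optional[str]        # None means the offer applies to any category
    multiplier: float              # points multiplier applied to the base rate
    min_purchase: Optional[float]  # minimum basket in rubles, if any
    valid_hours: Optional[tuple]   # (start_hour, end_hour), if time-limited
    valid_days: Optional[list]     # e.g. ['Mon', 'Tue'], if day-limited
    description: str
    expected_lift: float           # filled in by the scoring step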

class LoyaltyPersonalizationEngine:
    # Column order shared by training and scoring so the model sees the same features
    FEATURE_COLUMNS = [
        'multiplier', 'has_category_restriction', 'has_time_restriction',
        'user_avg_purchase', 'user_purchase_frequency', 'user_category_match',
    ]
    # Base earn rate from the introduction: 10 points per 1,000 rubles
    BASE_POINTS_PER_RUBLE = 0.01

    def __init__(self):
        self.llm = Anthropic()  # reads ANTHROPIC_API_KEY from the environment
        self.engagement_model = None
        self.user_preferences = {}

    def train_engagement_model(self, offers_history: pd.DataFrame):
        """
        offers_history: user_id, offer_type, category, multiplier,
        was_shown, was_used, purchase_uplift
        """
        from sklearn.model_selection import cross_val_score

        features = self._extract_offer_features(offers_history)
        X = features.drop(columns=['was_used'])
        y = features['was_used']

        self.engagement_model = GradientBoostingClassifier(
            n_estimators=200, learning_rate=0.05, random_state=42
        )
        # cross_val_score fits clones of the estimator, so the final fit is still needed
        cv_scores = cross_val_score(self.engagement_model, X, y, cv=5, scoring='roc_auc')
        self.engagement_model.fit(X, y)
        print(f"Engagement model AUC: {cv_scores.mean():.3f} ± {cv_scores.std():.3f}")

    def _extract_offer_features(self, df: pd.DataFrame) -> pd.DataFrame:
        # Reuse df's index so rows stay aligned and scalar defaults broadcast
        features = pd.DataFrame(index=df.index)
        features['multiplier'] = df['multiplier']
        features['has_category_restriction'] = df['category'].notna().astype(int)
        if 'valid_hours' in df.columns:
            features['has_time_restriction'] = df['valid_hours'].notna().astype(int)
        else:
            features['has_time_restriction'] = 0
        # Neutral defaults when user-level columns are missing from the history
        features['user_avg_purchase'] = df.get('user_avg_purchase', 500)
        features['user_purchase_frequency'] = df.get('user_purchase_frequency', 2)
        features['user_category_match'] = df.get('user_category_match', 0.5)
        features['was_used'] = df.get('was_used', 0)
        return features

    def generate_personalized_offers(self, user: dict,
                                     n_offers: int = 3) -> list[LoyaltyOffer]:
        """Generate personalized offers"""
        # Analyze user preferences
        top_categories = user.get('top_categories', [])[:3]
        preferred_hours = user.get('preferred_purchase_hours', [10, 11, 12, 18, 19, 20])
        avg_basket = user.get('avg_order_value', 500)
        tier = user.get('loyalty_tier', 'bronze')

        # Generate candidates
        candidates = []

        # Category bonuses for the two strongest categories
        for category in top_categories[:2]:
            multiplier = 2.0 if tier == 'bronze' else 1.5
            candidates.append(LoyaltyOffer(
                user_id=user['user_id'],
                offer_type='double_points',
                category=category,
                multiplier=multiplier,
                min_purchase=None,
                valid_hours=None,
                valid_days=None,
                description=f"×{multiplier} points in the {category} category",
                expected_lift=0.0
            ))

        # Threshold bonus: about 30% above the average basket, rounded to 100 rubles
        threshold = round(avg_basket * 1.3 / 100) * 100
        # Extra points on top of the base rate at the threshold amount
        bonus_points = int(threshold * (1.5 - 1) * self.BASE_POINTS_PER_RUBLE)
        candidates.append(LoyaltyOffer(
            user_id=user['user_id'],
            offer_type='threshold_bonus',
            category=None,
            multiplier=1.5,
            min_purchase=threshold,
            valid_hours=None,
            valid_days=['Mon', 'Tue', 'Wed', 'Thu'],
            description=f"+{bonus_points} points for a purchase of {threshold}₽ or more",
            expected_lift=0.0
        ))

        # Time bonus around the user's most frequent purchase hour
        if preferred_hours:
            peak_hour = max(set(preferred_hours), key=preferred_hours.count)
            end_hour = min(peak_hour + 2, 24)  # do not run past midnight
            candidates.append(LoyaltyOffer(
                user_id=user['user_id'],
                offer_type='time_bonus',
                category=None,
                multiplier=2.0,
                min_purchase=None,
                valid_hours=(peak_hour, end_hour),
                valid_days=None,
                description=f"×2 points from {peak_hour}:00 to {end_hour}:00",
                expected_lift=0.0
            ))

        # Streak reward
        current_streak = user.get('consecutive_weeks_with_purchase', 0)
        if current_streak >= 2:
            candidates.append(LoyaltyOffer(
                user_id=user['user_id'],
                offer_type='streak_reward',
                category=None,
                multiplier=3.0,
                min_purchase=None,
                valid_hours=None,
                valid_days=None,
                description=f"Keep the streak going! ×3 points for week {current_streak + 1} in a row",
                expected_lift=0.0
            ))

        # Score and pick the best; without a trained model, keep generation order
        if self.engagement_model is not None:
            scored = self._score_candidates(candidates, user)
            return scored[:n_offers]
        return candidates[:n_offers]

    def _score_candidates(self, candidates: list[LoyaltyOffer],
                          user: dict) -> list[LoyaltyOffer]:
        """Score candidates with the ML model"""
        user_cats = user.get('top_categories', [])
        rows = []
        for offer in candidates:
            category_match = 1.0 if offer.category in user_cats else 0.3
            rows.append([
                offer.multiplier,
                int(offer.category is not None),
                int(offer.valid_hours is not None),
                user.get('avg_order_value', 500),
                user.get('purchase_frequency_monthly', 2),
                category_match
            ])

        # Same column names as in training, so scikit-learn can match features
        X = pd.DataFrame(rows, columns=self.FEATURE_COLUMNS)
        probs = self.engagement_model.predict_proba(X)[:, 1]

        # Note: this stores the predicted probability of use, not a measured uplift
        for offer, prob in zip(candidates, probs):
            offer.expected_lift = float(prob)
        return sorted(candidates, key=lambda x: x.expected_lift, reverse=True)

    def generate_offer_message(self, offer: LoyaltyOffer, user: dict) -> str:
        """AI-generated offer description"""
        top_categories = user.get('top_categories') or []
        favorite = top_categories[0] if top_categories else 'shopping'
        response = self.llm.messages.create(
            model="claude-3-5-sonnet-20241022",
            max_tokens=80,
            messages=[{
                "role": "user",
                "content": f"""Write a brief, exciting loyalty offer message (1 sentence, emoji allowed).
Offer: {offer.description}
User name: {user.get('first_name', '')}
Loyalty tier: {user.get('loyalty_tier', 'bronze')}
User's favorite: {favorite}"""
            }]
        )
        return response.content[0].text
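Because `generate_offer_message` depends on an external API call, a caller may want a plain-template fallback for when the call fails or returns unusable text. The sketch below is one possible wrapper, not part of the engine above: the function name `offer_message_with_fallback`, the injected `generate` callable, and the `max_len` limit are all hypothetical.

```python
from typing import Callable, Optional


def offer_message_with_fallback(description: str,
                                first_name: str = "",
                                generate: Optional[Callable[[str], str]] = None,
                                max_len: int = 160) -> str:
    """Return generated copy if it is usable, otherwise a plain template."""
    greeting = f"{first_name}, " if first_name else ""
    fallback = f"{greeting}{description}"
    if generate is None:
        return fallback
    try:
        text = generate(description).strip()
    except Exception:
        # Any provider error (timeout, rate limit, bad response) uses the template
        return fallback
    # Empty or overly long output is treated as unusable
    if not text or len(text) > max_len:
        return fallback
    return text


# Without a generator the template is returned as-is
print(offer_message_with_fallback("x2 points in the coffee category", "Masha"))
```

With the engine above, `generate` could be something like `lambda _: engine.generate_offer_message(offer, user)`, so the customer still sees the offer description if the LLM call fails.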

Gamifying the Loyalty Program

class LoyaltyGamification:
    """Game mechanics to increase engagement"""

    def get_user_progress(self, user: dict) -> dict:
        """Current progress and upcoming achievements"""
        current_points = user.get('points_balance', 0)
        current_tier = user.get('loyalty_tier', 'bronze')

        # Ordered from lowest to highest tier (dicts keep insertion order)
        tier_thresholds = {
            'bronze': 0, 'silver': 1000, 'gold': 5000, 'platinum': 20000
        }
        # Treat an unknown tier name as the entry tier instead of raising
        if current_tier not in tier_thresholds:
            current_tier = 'bronze'

        # Next tier (None when the user is already at the top)
        tiers = list(tier_thresholds.items())
        current_idx = next(i for i, (t, _) in enumerate(tiers) if t == current_tier)
        next_tier = tiers[current_idx + 1] if current_idx + 1 < len(tiers) else None

        progress = {
            'current_tier': current_tier,
            'current_points': current_points,
            'streak_weeks': user.get('consecutive_weeks_with_purchase', 0),
            'achievements': user.get('achievements', [])
        }

        if next_tier:
            tier_floor = tier_thresholds[current_tier]
            points_needed = next_tier[1] - current_points
            progress['next_tier'] = next_tier[0]
            progress['points_to_next_tier'] = max(0, points_needed)
            # Share of the current tier's point range already covered, clamped to 0..100
            pct = (current_points - tier_floor) / (next_tier[1] - tier_floor) * 100
            progress['progress_pct'] = max(0, min(100, pct))

        return progress
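The progress calculation is easiest to check on a single case. The standalone sketch below reuses the tier thresholds from the class above; the helper name `tier_progress` and the sample balance of 2,200 points are illustrative assumptions.

```python
TIER_THRESHOLDS = {"bronze": 0, "silver": 1000, "gold": 5000, "platinum": 20000}


def tier_progress(points, tier):
    """Progress toward the next tier, or None for the top tier."""
    names = list(TIER_THRESHOLDS)
    idx = names.index(tier)
    if idx + 1 == len(names):
        return None  # already at the highest tier
    floor = TIER_THRESHOLDS[tier]
    ceiling = TIER_THRESHOLDS[names[idx + 1]]
    # Share of the current tier's range that is already covered
    pct = 100 * (points - floor) / (ceiling - floor)
    return {
        "next_tier": names[idx + 1],
        "points_to_next_tier": max(0, ceiling - points),
        "progress_pct": max(0.0, min(100.0, pct)),
    }


# A silver member with 2,200 points: the silver range is 1,000 to 5,000,
# so 1,200 of 4,000 points are covered and 2,800 remain until gold.
print(tier_progress(2200, "silver"))
```

Measuring progress within the current tier's range, rather than from zero, means the progress bar resets after each promotion.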

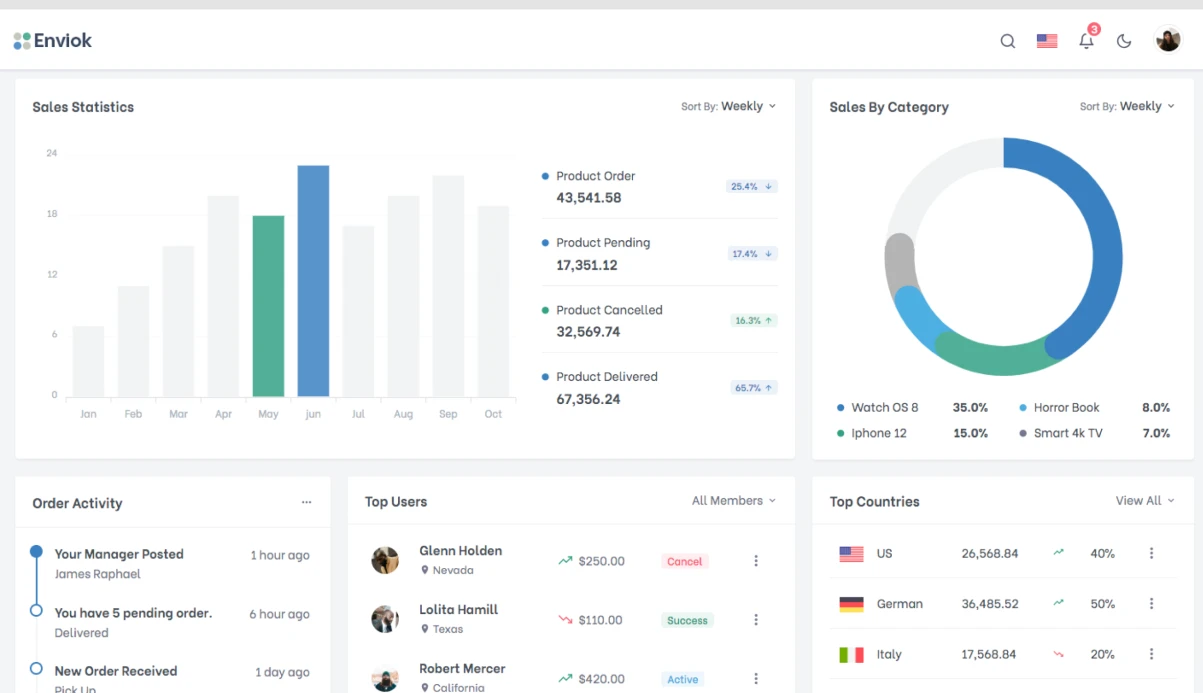

Personalized loyalty programs show a 40-60% increase in points redemption rate, a 25-35% increase in purchase frequency among members, and an NPS among loyalty program members that is 15-20 points above average. Key metrics to optimize: redemption rate (target above 40%), active member rate (above 60% over 90 days), and points liability (keep track of the cost of points that have been issued).
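The three metrics can be tracked with a few lines of code. The text does not define them precisely, so the sketch below uses common working definitions as assumptions: redemption rate as redeemed points over issued points, active member rate as the share of members with a purchase in the last 90 days, and points liability as outstanding points times the cost per point times the share expected to be redeemed. All function and parameter names are hypothetical.

```python
from datetime import date, timedelta


def redemption_rate(points_issued, points_redeemed):
    """Share of issued points that were redeemed (assumed definition)."""
    return points_redeemed / points_issued if points_issued else 0.0


def active_member_rate(last_purchase_dates, today, window_days=90):
    """Share of members whose last purchase falls inside the window."""
    if not last_purchase_dates:
        return 0.0
    cutoff = today - timedelta(days=window_days)
    # Members with no purchase on record are passed as None and count as inactive
    active = sum(1 for d in last_purchase_dates if d is not None and d >= cutoff)
    return active / len(last_purchase_dates)


def points_liability(outstanding_points, cost_per_point_rub, expected_redemption=1.0):
    """Estimated ruble cost of points that have been issued but not yet redeemed."""
    return outstanding_points * cost_per_point_rub * expected_redemption


members = [date(2024, 6, 1), date(2024, 1, 1), None, date(2024, 4, 5)]
print(redemption_rate(100_000, 42_000))                # compare with the >40% target
print(active_member_rate(members, date(2024, 6, 30)))  # compare with the >60% target
print(points_liability(58_000, cost_per_point_rub=1.0, expected_redemption=0.5))
```

The `expected_redemption` factor matters because some points expire unused; setting it to 1.0 gives the most conservative estimate.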